About Client

AWS Compute & High-performance Computing

Tonkin + Taylor is New Zealand’s leading environment and engineering consultancy, with offices located globally. They shape the interfaces between people and the environment, which include earth, water, and air. Their awards include the Beaton Client Choice Award for Best Provider to Government and Community (2022) and the IPWEA Award for Excellence in Water Projects for the Papakura Water Treatment Plant (2021).

- https://www.tonkintaylor.co.nz/

- Location: New Zealand

Project Background

Tonkin + Taylor were launching a full suite of digital products and chose AWS as their cloud environment. They wanted to accelerate their digital transformation and add greater business value through AWS Development Environment best practices. To achieve this, we needed to configure AWS Compute & High-Performance Computing in line with best practices and compliance standards, so that it could serve as a foundation for implementing more applications.

The AWS Lake House is a central data hub that consolidates data from various sources and serves all applications and users. It can quickly identify and integrate any data source. The data goes through a 3-stage refining process: Landing, Raw, and Transformed. After refinement, the data is added to the data catalog and is readily available for consumption through a relational database.
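The three refinement stages can be pictured as prefixes in object storage that a file moves through in order. The following is a minimal sketch under that assumption, not the project's actual layout: the `LakeObject` class, the `promote` helper, the key format, and the example source and dataset names are all hypothetical.

```python
from dataclasses import dataclass, replace
from datetime import date

# The three refinement stages named in the text, in processing order.
STAGES = ("landing", "raw", "transformed")


@dataclass(frozen=True)
class LakeObject:
    """One file in the lake. All field names here are illustrative."""
    source: str      # hypothetical source system, e.g. "gis"
    dataset: str     # hypothetical dataset, e.g. "boreholes"
    filename: str
    ingested: date
    stage: str = "landing"

    def key(self) -> str:
        """Build an object key: stage/source/dataset/yyyy/mm/dd/filename."""
        d = self.ingested
        return (
            f"{self.stage}/{self.source}/{self.dataset}/"
            f"{d.year:04d}/{d.month:02d}/{d.day:02d}/{self.filename}"
        )


def promote(obj: LakeObject) -> LakeObject:
    """Return a copy of obj in the next stage; raise if it is already final."""
    idx = STAGES.index(obj.stage)
    if idx == len(STAGES) - 1:
        raise ValueError(f"{obj.filename} is already in the final stage")
    return replace(obj, stage=STAGES[idx + 1])


if __name__ == "__main__":
    landed = LakeObject("gis", "boreholes", "sample.csv", date(2024, 1, 15))
    print(landed.key())
    print(promote(landed).key())
```

Keeping the stage as the leading prefix means each stage can be given its own access rules and catalog entries, which is one common way to separate unvalidated data from data that is ready for consumption.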

Scope & Requirements for AWS Compute & High Performance Computing

The first phase of the AWS environment setup covered the following implementation:

- Implement Data Lakehouse on AWS

Implementation

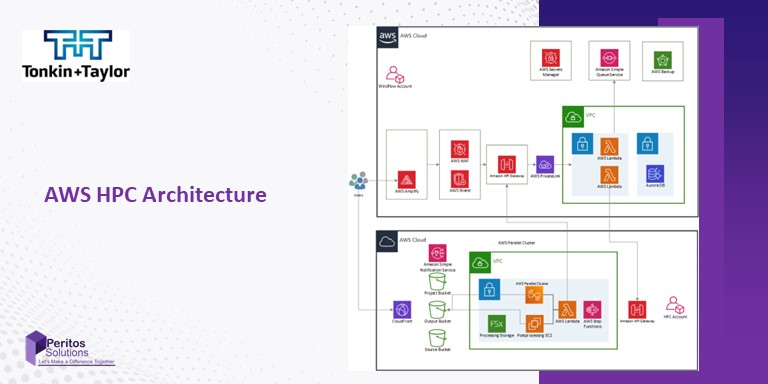

Technology and Architecture of AWS Compute & High Performance Computing

Technology/Services used

We used AWS services and helped the client set up the following:

- Cloud: AWS

- Organization setup: AWS Control Tower

- AWS SSO for authentication using existing Azure AD credentials

- Policy setup: AWS service control policies (SCPs)

- Templates for provisioning commonly used AWS services
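To illustrate what a service control policy looks like, here is a minimal sketch that builds one as a JSON document. It assumes a region-restriction policy, which is a common SCP pattern but is not stated in the source; the function name, the statement ID, the list of exempted global services, and the example region are all assumptions made for illustration.

```python
import json


def region_restriction_scp(allowed_regions):
    """Build an SCP document that denies requests outside allowed_regions."""
    if not allowed_regions:
        raise ValueError("at least one region is required")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyOutsideApprovedRegions",  # hypothetical statement ID
                "Effect": "Deny",
                # Global services are exempted so they keep working everywhere.
                # This list is illustrative, not exhaustive.
                "NotAction": ["iam:*", "organizations:*", "sts:*", "support:*"],
                "Resource": "*",
                "Condition": {
                    "StringNotEquals": {
                        "aws:RequestedRegion": sorted(allowed_regions)
                    }
                },
            }
        ],
    }


if __name__ == "__main__":
    # The region below is an assumed example, not taken from the project.
    print(json.dumps(region_restriction_scp(["ap-southeast-2"]), indent=2))
```

The resulting JSON could be attached to an organizational unit so that every account beneath it inherits the restriction.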

Security & Compliance

- Tagging Policies

- AWS Config for compliance checks

- NIST compliance

- Guardrails

- Security Hub
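A tagging policy usually comes down to two checks: required keys must be present, and certain keys may only take approved values. The sketch below shows that logic in plain Python. The required tag names and the allowed environment values are hypothetical, since the source does not list the client's actual tagging rules.

```python
# Hypothetical policy values; the project's real tag keys are not given.
REQUIRED_TAGS = {"Owner", "CostCentre", "Environment"}
ALLOWED_ENVIRONMENTS = {"dev", "test", "prod"}


def tag_violations(tags):
    """Return a list of human-readable problems for one resource's tags."""
    # Report missing required keys in a stable, alphabetical order.
    problems = [f"missing tag: {name}" for name in sorted(REQUIRED_TAGS - set(tags))]
    # Check the value only when the key is present, to avoid double reporting.
    env = tags.get("Environment")
    if env is not None and env not in ALLOWED_ENVIRONMENTS:
        problems.append(f"invalid Environment value: {env}")
    return problems


if __name__ == "__main__":
    print(tag_violations({"Owner": "data-team", "Environment": "staging"}))
```

A check like this can run in a deployment pipeline before resources are created, complementing the compliance checks that run after the fact.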

Network Architecture

- Site-to-Site VPN architecture using Transit Gateway

- Distributed AWS Network Firewall

- Monitoring with CloudWatch and VPC Flow Logs

Backup and Recovery

The cloud systems and components used follow the AWS Well-Architected Framework, and all resources were deployed across multiple Availability Zones with an uptime of 99.99% or more.

Cost Optimization

Alerts and notifications are configured for AWS costs.
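Cost alerts typically fire when spend crosses a fraction of a budget. The sketch below shows that threshold logic on its own, without calling any AWS service. The function name, the default thresholds, and the example figures are assumptions, as the source does not describe how the alerts were defined.

```python
def crossed_thresholds(spend, budget, thresholds=(0.5, 0.8, 1.0)):
    """Return the budget fractions that the current spend has reached.

    spend and budget are in the same currency; thresholds are fractions
    of the budget (0.8 means 80%). The defaults are illustrative.
    """
    if budget <= 0:
        raise ValueError("budget must be positive")
    ratio = spend / budget
    return [t for t in sorted(thresholds) if ratio >= t]


if __name__ == "__main__":
    # Hypothetical month-to-date spend against a hypothetical budget.
    for level in crossed_thresholds(spend=850.0, budget=1000.0):
        print(f"alert: spend has reached {level:.0%} of budget")
```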

Code Management, Deployment

CloudFormation scripts for creating StackSets, along with scripts for provisioning AWS services, were handed over to the client.
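As an illustration of the kind of template such scripts might produce, the sketch below generates a small CloudFormation template for one storage bucket. It is not the delivered code: the `bucket_template` function, the `BucketPrefix` parameter, the `ZoneBucket` logical ID, and the tag key are all hypothetical.

```python
import json


def bucket_template(zone):
    """Return a CloudFormation template (as a dict) for one lake zone bucket."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": f"Data lake bucket for the {zone} zone (illustrative)",
        # Hypothetical parameter so each account can supply its own prefix.
        "Parameters": {"BucketPrefix": {"Type": "String"}},
        "Resources": {
            "ZoneBucket": {
                "Type": "AWS::S3::Bucket",
                "Properties": {
                    # Fn::Sub fills in the parameter value at deploy time.
                    "BucketName": {"Fn::Sub": "${BucketPrefix}-" + zone},
                    "VersioningConfiguration": {"Status": "Enabled"},
                    "Tags": [{"Key": "Zone", "Value": zone}],
                },
            }
        },
    }


if __name__ == "__main__":
    print(json.dumps(bucket_template("raw"), indent=2))
```

Generating templates from one function keeps the per-zone stacks consistent, and the same JSON can be deployed to several accounts through a StackSet.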

AWS Compute & High Performance Computing Challenges & Solutions

- Diverse data sources: data analytics, cleanup, and integration patterns were used to pull data from the different data sources

- On-premise data connection to data lake migration: a secure site-to-site AWS connection was implemented

- Templatized format for creating pipelines: created scripts in a specific format, deployment scripts, and CI/CD scripts

Support

We provide ongoing support as a dedicated development partner for the client.

Next Phase

We are now looking at the next phase of the project, which involves:

- Adding API and file-based data sources

- Processing data so that it can be ingested and used by other applications